Colloquium explores ‘wicked problem’ of AI

Faculty and students agree: 'AI is not a replacement'

Written by Noel Teter '24

Published on April 01, 2026

On today’s college campuses, one can hardly go a single day without hearing the letters “AI.” Eastern Connecticut State University recently dedicated a full day to unpacking the impact of artificial intelligence on liberal arts institutions. The colloquium, titled “Liberal Arts Education in the Age of AI,” took place March 24 in the Student Center.

Several faculty and student discussions during the day revealed nuanced and varying opinions on AI, with most focusing on generative AI and the extent to which it is used to complete academic work. The colloquium yielded no clear consensus on AI usage, instead giving students and faculty members space to voice concerns about, and potential benefits of, the rapidly evolving technology.

Computer science Professor and Department Chair Garrett Dancik served as co-chair of the event. Regardless of AI’s implications, Dancik asserted the importance of Eastern’s Liberal Arts Core (ELAC) curriculum as a means of navigating this unprecedented landscape.

Specifically, Dancik presented each of ELAC’s five key learning outcomes (communication, creativity, ethical reasoning, critical thinking, and quantitative literacy) as a response to the challenges posed by AI, from creatively and efficiently leveraging its capabilities to critically and ethically evaluating its outputs.

In agreement, conference co-chair and Dean of Arts and Sciences Emily Todd remarked, “The liberal arts are essential at this time, as they help us ask key questions.”

Guest lecturer: ‘We didn’t ask for this’

Lew Ludwig, a professor of mathematics at Denison University, delivered a guest lecture titled “We Didn’t Ask for This.” Ludwig’s presentation promoted responsible use of generative AI by students and faculty while emphasizing that the emergence of the technology was largely unwanted, or at least unexpected.

Ludwig acknowledged that most educators and students did not ask for the “unregulated, untested, and rapidly evolving” AI landscape. “This technology, [whether we like it or not], is not going away,” he said.

Ludwig advised extending grace to students and junior faculty members, who often hide their AI use for fear of being punished or ostracized. He conceptualized AI as a “wicked problem,” one that cannot be solved but only engaged with.

“Nobody trained us for this,” said Ludwig. “We’re all improvising.”

AI as ‘cognitive augmentation’

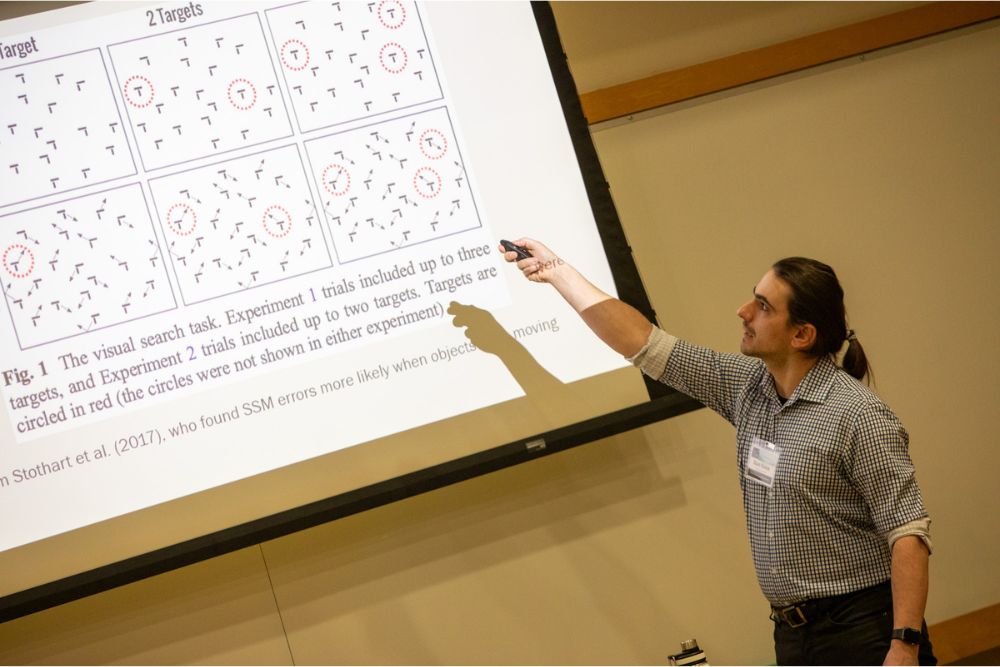

Several Eastern faculty members shared presentations on how AI informs their research process in a session moderated by biology Professor Barbara Murdoch. Psychological science Professor Stanislaw Kolek began the session with a presentation on navigating the errors generative AI can make when producing images for research.

“Using AI is not so easy,” he said. “I’m trying to provide undergraduates with that experience because it’s sought after in the industry.”

Business administration Professor Fatma Pakdil followed with a presentation on the negative opinions she once held toward generative AI, before she realized that she needed to adopt responsible use of the technology to keep up with the methods used in high-level academic journals.

“AI should be used as a research assistant, not a replacement for critical thinking,” said Pakdil.

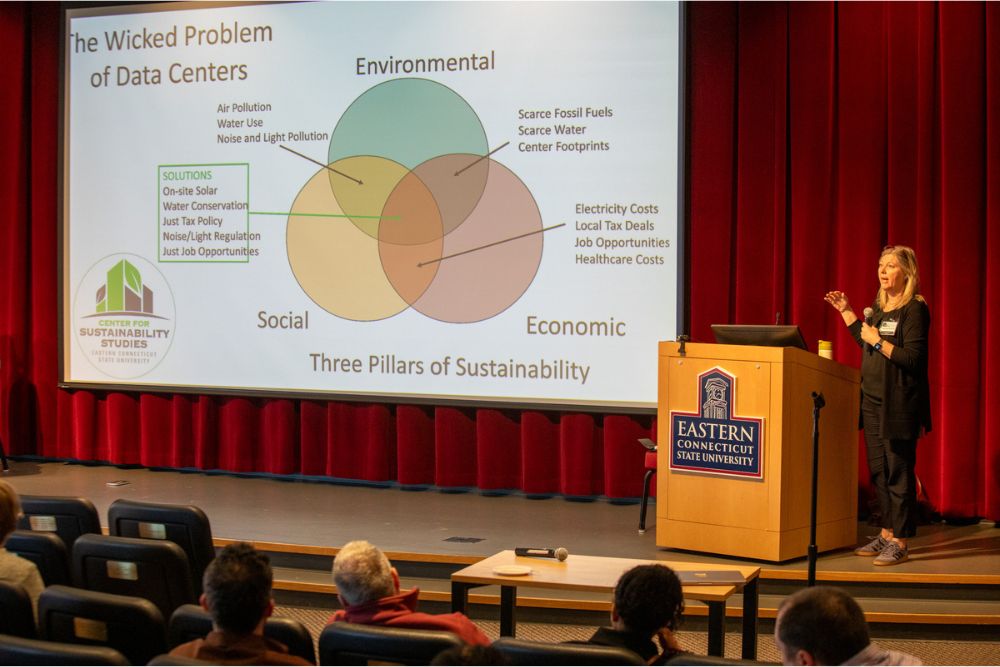

Computer science Lecturer Joel Weymouth concluded the session with a presentation titled “Preserving Human Intellect: Using AI as Cognitive Augmentation.”

Weymouth encouraged attendees to use AI tools as “amplifiers” of learning, rather than “substitutes.” He promoted teaching productive AI use in higher education, which in his view “helps students get started without replacing thinking.”

Panelist perspectives: ‘A mile wide but an inch deep’

As the colloquium continued, participants voiced a growing number of criticisms of generative AI. Five students led a panel discussion, moderated by Dancik, providing their perspectives on AI’s role in their education.

Communication major Joshua Aziz said, “AI is very good if you want to use it as an expediter.” In creative disciplines, however, AI poses a threat to originality, he said: “It’s not creative; it’s a recycler.”

English major Sean Crisci urged discernment when deciding whether to use AI during a creative process like writing. “You can still write a novel without touching AI,” he said. “It’s up to the individual.”

However, Crisci added that this subjectivity creates confusion for creatives, something he would rather avoid: “I would rather AI be wrong 100% of the time than be right 90% of the time.”

Biochemistry major Michael Freeman added: “When you pull from its knowledge, it’s a mile wide but an inch deep.”

Data science major Rylee LeClair, a mathematics tutor, expressed frustration over students using AI to solve math problems. “You got the homework assignment right, but now you’re going to fail the test,” she said.

Computer science major Neo Gomes added that cases like these erode human resilience. Because AI shortcuts students’ learning, Gomes said, “A lot of us don’t have the mental fortitude to do difficult things anymore.”

A faculty panel followed, moderated by computer science Professor Sarah Tasneem and featuring English Professor Jordan Youngblood and environmental earth science Professor Patricia Szczys, executive director of sustainability.

Youngblood encouraged students to embrace “less efficient means” of solving problems, adding that he does not use AI, especially for writing, because it “robs you of a voice.” He likened using AI to entering a marathon and being transported directly to the finish line.

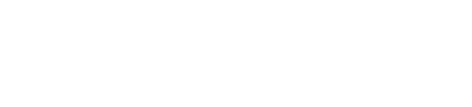

Szczys presented research on AI’s devastating impact on the environment through the construction and operation of data centers, which consume massive amounts of water and electricity. “These are real challenges for everyone in a climate-changed environment,” she said, echoing the concept of AI as a “wicked problem.”

Conversations continue

At a lunch and roundtable session, attendees gathered at 10 tables, each led by a faculty member and centered on a discipline-specific topic related to AI’s impact on the liberal arts.

Media Technology Coordinator Travis Houldcroft led a discussion on AI in the arts. Student Jasmine Shi said she does not consider AI-generated art to be real art. “To make AI art is to take from other artists,” she said, implying that generative AI’s outputs are derived from the work it is trained on.

Political science Lecturer Marisol Garcia led a discussion on discernment in AI use. She explained why she encourages her students to use AI sparingly rather than forbidding it outright: “Anything forbidden, they’re more attracted to.”

Professional perspectives

An industry and professional panel explored perspectives on AI from a variety of industries around Connecticut. Candace Carlson, senior director of innovation and informatics operations for Hartford HealthCare, stressed the high stakes of delegating work to AI in a field that affects people’s well-being.

“The only way we can leverage AI is with strict governance,” she said. “We’re all learning together, and that can be dangerous when you’re dealing with people’s lives.”

Jim Haddanin, investigative editor for Connecticut Public, discussed how generative AI can both compromise integrity and expedite storytelling in investigative journalism. “The speed and prevalence of AI-generated misinformation is a big concern,” he said. “We’re going to be fed boring and repetitive content that may drown out human content.”

Haddanin added that CT Public has AI bots that scan government meetings and public records to highlight possible stories: “AI helps us discover news worth covering by watching government meetings where we don’t have reporters, but final output is by human beings.”

For more information on Eastern’s “Liberal Arts Education in the Age of AI” colloquium, visit: https://www.easternct.edu/liberal-arts-ai-colloquium/index.html